Introduction

User bocorps pointed me in the direction of gst-rpicamsrc. This is “… a GStreamer wrapper around the raspivid/raspistill functionality of the RaspberryPi, providing a GStreamer source element capturing from the Rpi camera.”

What this means is that instead of piping the output of raspivid into gstreamer, gstreamer has a source element to read the camera directly. This is similar to using the video4linux (v4l) source element, but negates the need for a v4l driver.

My hope was that by integrating the camera functionality into a gstreamer source element the latency would be reduced. Unfortunately, I actually saw an 18% increase in latency.

Installation

Before I could use the gst-rpicamsrc element, I needed to download the source and build it. As I was working with a minimal install of Raspbian Jessie, I needed to install the git package before I could do anything else:

sudo apt-get install git

With git installed I could download the latest sources for gst-rpicamsrc.

git clone https://github.com/thaytan/gst-rpicamsrc.git

With that done, a look in the REQUIREMENTS file showed which other packages were needed for the build.

sudo apt-get install autoconf automake libtool libgstreamer1.0-dev libgstreamer-plugins-base1.0-dev libraspberrypi-dev

Finally, I was able to complete the build and install.

./autogen.sh --prefix=/usr --libdir=/usr/lib/arm-linux-gnueabihf/

make

sudo make install

The command `gst-inspect-1.0 rpicamsrc` produces a list of the available parameters.

Factory Details:

Rank none (0)

Long-name Raspberry Pi Camera Source

Klass Source/Video

Description Raspberry Pi camera module source

Author Jan Schmidt <jan@centricular.com>

Plugin Details:

Name rpicamsrc

Description Raspberry Pi Camera Source

Filename /usr/lib/arm-linux-gnueabihf/gstreamer-1.0/libgstrpicamsrc.so

Version 1.0.0

License LGPL

Source module gstrpicamsrc

Binary package GStreamer

Origin URL http://gstreamer.net/

GObject

+----GInitiallyUnowned

+----GstObject

+----GstElement

+----GstBaseSrc

+----GstPushSrc

+----GstRpiCamSrc

Pad Templates:

SRC template: 'src'

Availability: Always

Capabilities:

video/x-h264

width: [ 1, 2147483647 ]

height: [ 1, 2147483647 ]

framerate: [ 0/1, 2147483647/1 ]

stream-format: byte-stream

alignment: au

profile: { baseline, main, high }

Element Flags:

no flags set

Element Implementation:

Has change_state() function: gst_base_src_change_state

Element has no clocking capabilities.

Element has no URI handling capabilities.

Pads:

SRC: 'src'

Implementation:

Has getrangefunc(): gst_base_src_getrange

Has custom eventfunc(): gst_base_src_event

Has custom queryfunc(): gst_base_src_query

Has custom iterintlinkfunc(): gst_pad_iterate_internal_links_default

Pad Template: 'src'

Element Properties:

name : The name of the object

flags: readable, writable

String. Default: "rpicamsrc0"

parent : The parent of the object

flags: readable, writable

Object of type "GstObject"

blocksize : Size in bytes to read per buffer (-1 = default)

flags: readable, writable

Unsigned Integer. Range: 0 - 4294967295 Default: 4096

num-buffers : Number of buffers to output before sending EOS (-1 = unlimited)

flags: readable, writable

Integer. Range: -1 - 2147483647 Default: -1

typefind : Run typefind before negotiating

flags: readable, writable

Boolean. Default: false

do-timestamp : Apply current stream time to buffers

flags: readable, writable

Boolean. Default: true

bitrate : Bitrate for encoding

flags: readable, writable

Integer. Range: 1 - 25000000 Default: 17000000

preview : Display preview window overlay

flags: readable, writable

Boolean. Default: true

preview-encoded : Display encoder output in the preview

flags: readable, writable

Boolean. Default: true

preview-opacity : Opacity to use for the preview window

flags: readable, writable

Integer. Range: 0 - 255 Default: 255

fullscreen : Display preview window full screen

flags: readable, writable

Boolean. Default: true

sharpness : Image capture sharpness

flags: readable, writable

Integer. Range: -100 - 100 Default: 0

contrast : Image capture contrast

flags: readable, writable

Integer. Range: -100 - 100 Default: 0

brightness : Image capture brightness

flags: readable, writable

Integer. Range: 0 - 100 Default: 50

saturation : Image capture saturation

flags: readable, writable

Integer. Range: -100 - 100 Default: 0

iso : ISO value to use (0 = Auto)

flags: readable, writable

Integer. Range: 0 - 3200 Default: 0

video-stabilisation : Enable or disable video stabilisation

flags: readable, writable

Boolean. Default: false

exposure-compensation: Exposure Value compensation

flags: readable, writable

Integer. Range: -10 - 10 Default: 0

exposure-mode : Camera exposure mode to use

flags: readable, writable

Enum "GstRpiCamSrcExposureMode" Default: 1, "auto"

(0): off - GST_RPI_CAM_SRC_EXPOSURE_MODE_OFF

(1): auto - GST_RPI_CAM_SRC_EXPOSURE_MODE_AUTO

(2): night - GST_RPI_CAM_SRC_EXPOSURE_MODE_NIGHT

(3): nightpreview - GST_RPI_CAM_SRC_EXPOSURE_MODE_NIGHTPREVIEW

(4): backlight - GST_RPI_CAM_SRC_EXPOSURE_MODE_BACKLIGHT

(5): spotlight - GST_RPI_CAM_SRC_EXPOSURE_MODE_SPOTLIGHT

(6): sports - GST_RPI_CAM_SRC_EXPOSURE_MODE_SPORTS

(7): snow - GST_RPI_CAM_SRC_EXPOSURE_MODE_SNOW

(8): beach - GST_RPI_CAM_SRC_EXPOSURE_MODE_BEACH

(9): verylong - GST_RPI_CAM_SRC_EXPOSURE_MODE_VERYLONG

(10): fixedfps - GST_RPI_CAM_SRC_EXPOSURE_MODE_FIXEDFPS

(11): antishake - GST_RPI_CAM_SRC_EXPOSURE_MODE_ANTISHAKE

(12): fireworks - GST_RPI_CAM_SRC_EXPOSURE_MODE_FIREWORKS

metering-mode : Camera exposure metering mode to use

flags: readable, writable

Enum "GstRpiCamSrcExposureMeteringMode" Default: 0, "average"

(0): average - GST_RPI_CAM_SRC_EXPOSURE_METERING_MODE_AVERAGE

(1): spot - GST_RPI_CAM_SRC_EXPOSURE_METERING_MODE_SPOT

(2): backlist - GST_RPI_CAM_SRC_EXPOSURE_METERING_MODE_BACKLIST

(3): matrix - GST_RPI_CAM_SRC_EXPOSURE_METERING_MODE_MATRIX

awb-mode : White Balance mode

flags: readable, writable

Enum "GstRpiCamSrcAWBMode" Default: 1, "auto"

(0): off - GST_RPI_CAM_SRC_AWB_MODE_OFF

(1): auto - GST_RPI_CAM_SRC_AWB_MODE_AUTO

(2): sunlight - GST_RPI_CAM_SRC_AWB_MODE_SUNLIGHT

(3): cloudy - GST_RPI_CAM_SRC_AWB_MODE_CLOUDY

(4): shade - GST_RPI_CAM_SRC_AWB_MODE_SHADE

(5): tungsten - GST_RPI_CAM_SRC_AWB_MODE_TUNGSTEN

(6): fluorescent - GST_RPI_CAM_SRC_AWB_MODE_FLUORESCENT

(7): incandescent - GST_RPI_CAM_SRC_AWB_MODE_INCANDESCENT

(8): flash - GST_RPI_CAM_SRC_AWB_MODE_FLASH

(9): horizon - GST_RPI_CAM_SRC_AWB_MODE_HORIZON

image-effect : Visual FX to apply to the image

flags: readable, writable

Enum "GstRpiCamSrcImageEffect" Default: 0, "none"

(0): none - GST_RPI_CAM_SRC_IMAGEFX_NONE

(1): negative - GST_RPI_CAM_SRC_IMAGEFX_NEGATIVE

(2): solarize - GST_RPI_CAM_SRC_IMAGEFX_SOLARIZE

(3): posterize - GST_RPI_CAM_SRC_IMAGEFX_POSTERIZE

(4): whiteboard - GST_RPI_CAM_SRC_IMAGEFX_WHITEBOARD

(5): blackboard - GST_RPI_CAM_SRC_IMAGEFX_BLACKBOARD

(6): sketch - GST_RPI_CAM_SRC_IMAGEFX_SKETCH

(7): denoise - GST_RPI_CAM_SRC_IMAGEFX_DENOISE

(8): emboss - GST_RPI_CAM_SRC_IMAGEFX_EMBOSS

(9): oilpaint - GST_RPI_CAM_SRC_IMAGEFX_OILPAINT

(10): hatch - GST_RPI_CAM_SRC_IMAGEFX_HATCH

(11): gpen - GST_RPI_CAM_SRC_IMAGEFX_GPEN

(12): pastel - GST_RPI_CAM_SRC_IMAGEFX_PASTEL

(13): watercolour - GST_RPI_CAM_SRC_IMAGEFX_WATERCOLOUR

(14): film - GST_RPI_CAM_SRC_IMAGEFX_FILM

(15): blur - GST_RPI_CAM_SRC_IMAGEFX_BLUR

(16): saturation - GST_RPI_CAM_SRC_IMAGEFX_SATURATION

(17): colourswap - GST_RPI_CAM_SRC_IMAGEFX_COLOURSWAP

(18): washedout - GST_RPI_CAM_SRC_IMAGEFX_WASHEDOUT

(19): posterise - GST_RPI_CAM_SRC_IMAGEFX_POSTERISE

(20): colourpoint - GST_RPI_CAM_SRC_IMAGEFX_COLOURPOINT

(21): colourbalance - GST_RPI_CAM_SRC_IMAGEFX_COLOURBALANCE

(22): cartoon - GST_RPI_CAM_SRC_IMAGEFX_CARTOON

rotation : Rotate captured image (0, 90, 180, 270 degrees)

flags: readable, writable

Integer. Range: 0 - 270 Default: 0

hflip : Flip capture horizontally

flags: readable, writable

Boolean. Default: false

vflip : Flip capture vertically

flags: readable, writable

Boolean. Default: false

roi-x : Normalised region-of-interest X coord

flags: readable, writable

Float. Range: 0 - 1 Default: 0

roi-y : Normalised region-of-interest Y coord

flags: readable, writable

Float. Range: 0 - 1 Default: 0

roi-w : Normalised region-of-interest W coord

flags: readable, writable

Float. Range: 0 - 1 Default: 1

roi-h : Normalised region-of-interest H coord

flags: readable, writable

Float. Range: 0 - 1 Default: 1

Usage

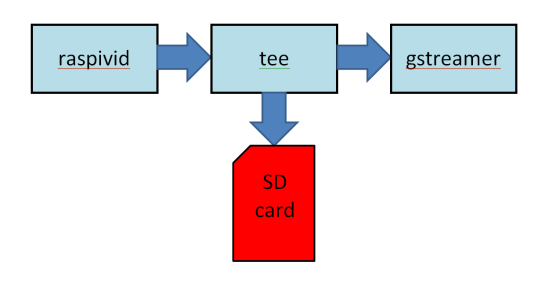

Because of the way gstreamer works, the parameters for the feed needed to be split and re-arranged in the streamer pipeline. Previously, all the parameters were specified as part of raspivid.

/opt/vc/bin/raspivid -t $DURATION -w $WIDTH -h $HEIGHT -fps $FRAMERATE -b $BITRATE -n -pf high -o - | gst-launch-1.0 -v fdsrc !

GStreamer parameters like width, height and frame rate are configured through capabilities (caps) negotiation with the next element. Other parameters like the bit rate and preview screen are controlled as part of the source element.

gst-launch-1.0 rpicamsrc bitrate=$BITRATE preview=0 ! video/x-h264,width=$WIDTH,height=$HEIGHT,framerate=$FRAMERATE/1 !

The new stream script is

#!/bin/bash

source remote.conf

if [ "$1" != "" ]

then

export FRAMERATE=$1

fi

NOW=`date +%Y%m%d%H%M%S`

FILENAME=$NOW-Tx.h264

gst-launch-1.0 rpicamsrc bitrate=$BITRATE preview=0 ! video/x-h264,width=$WIDTH,height=$HEIGHT,framerate=$FRAMERATE/1,profile=high ! h264parse ! rtph264pay config-interval=1 pt=96 ! udpsink host=$RX_IP port=$UDPPORT

Tests

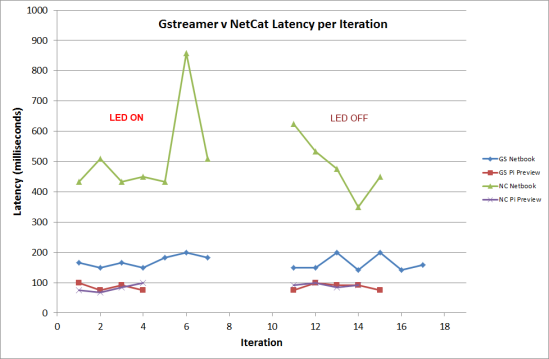

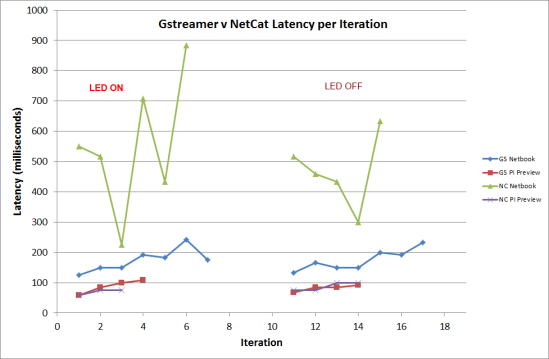

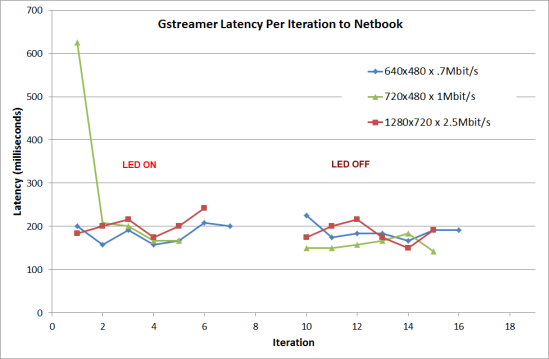

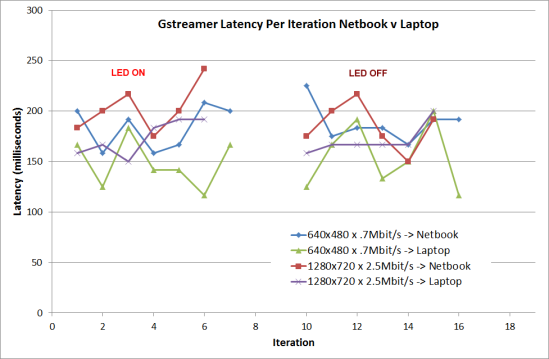

This test was a straight comparison between the old and new scripts using the same settings and the same testing methodology as previously. The resolution was 1280 x 720 pixels with a 6Mbps bitrate.

Update: Since the original article was published, user Jan Schmidt spotted that I had misspelled “framerate” as “framereate” in the gst-rpicamsrc script. He also suggested that I try the baseline profile and a queue element to decouple the video capture from the UDP transmission. With this in mind, I have re-run the tests.
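The variants suggested in the update differ only in the profile field of the caps and in whether a queue element is present, so they can be generated from one helper. The following is a minimal sketch, not the script used for the tests: the function name `build_pipeline` is made up for illustration, the position of the queue (directly after the caps filter) is an assumption, and the receiver address and port are placeholders. It only prints the command so that it can be checked before running it on the Pi.

```bash
#!/bin/bash
# Sketch only: prints the gst-launch-1.0 command instead of running it.
# Resolution, bitrate and frame rate match the tests in this article;
# RX_IP and UDPPORT are placeholder values, not taken from remote.conf.

WIDTH=${WIDTH:-1280}
HEIGHT=${HEIGHT:-720}
FRAMERATE=${FRAMERATE:-48}
BITRATE=${BITRATE:-6000000}
RX_IP=${RX_IP:-192.168.1.10}   # hypothetical receiver address
UDPPORT=${UDPPORT:-5000}       # hypothetical port

build_pipeline() {
    local profile=$1      # baseline, main or high
    local use_queue=$2    # 1 = insert a queue element, 0 = leave it out

    # Width, height, frame rate and profile go into the caps filter.
    local caps="video/x-h264,width=$WIDTH,height=$HEIGHT,framerate=$FRAMERATE/1,profile=$profile"

    # Assumed placement: the queue sits between the caps filter and h264parse.
    local queue=""
    if [ "$use_queue" = "1" ]; then
        queue="queue ! "
    fi

    echo "gst-launch-1.0 rpicamsrc bitrate=$BITRATE preview=0 ! $caps ! ${queue}h264parse ! rtph264pay config-interval=1 pt=96 ! udpsink host=$RX_IP port=$UDPPORT"
}

# Print two of the four tested combinations.
build_pipeline baseline 1
build_pipeline high 0
```

Writing the caps string in one place also means a field name such as framerate only has to be spelled correctly once.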

Results

Almost immediately I had the feeling that gst-rpicamsrc was slower. Analysis of the video showed I was correct: the latency using gst-rpicamsrc was 18% higher than using raspivid.

Update:

Running the original erroneous script with debugging on showed that the capture was running at 30fps instead of the intended 48 fps. Here are the new results averaged from 10 cycles.

| Profile | gst-rpicamsrc @ 48 fps, no queue (ms) | gst-rpicamsrc @ 48 fps, with queue (ms) | raspivid @ 48 fps, no queue (ms) | raspivid @ 48 fps, with queue (ms) |
| --- | --- | --- | --- | --- |
| baseline | 184.2 | 175.4 | 153.9 | 156.9 |
| high | 186.2 | 185 | 154.1 | 159.7 |

gst-rpicamsrc @ 30 fps, high profile, no queue = 198.2 ms
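One rough way to read the 30 fps figure is in terms of the frame interval, the time between two consecutive frames. The sketch below is a back-of-the-envelope check, not a measurement: it compares the frame intervals at 30 and 48 fps with the difference between the two high-profile, no-queue gst-rpicamsrc results. The helper names are made up for illustration.

```bash
#!/bin/bash
# Frame interval in milliseconds for a given frame rate.
frame_interval_ms() {
    # $1 = frames per second
    LC_ALL=C awk -v fps="$1" 'BEGIN { printf "%.1f\n", 1000 / fps }'
}

# Difference between two millisecond values.
diff_ms() {
    LC_ALL=C awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", a - b }'
}

i30=$(frame_interval_ms 30)
i48=$(frame_interval_ms 48)

echo "frame interval at 30 fps: $i30 ms"
echo "frame interval at 48 fps: $i48 ms"
echo "interval difference:      $(diff_ms "$i30" "$i48") ms"

# Measured gst-rpicamsrc latencies (high profile, no queue) from this article.
echo "measured difference:      $(diff_ms 198.2 186.2) ms"
```

The intervals differ by 12.5 ms and the measured latencies by 12.0 ms. That is consistent with about one frame interval of the latency scaling with the frame rate, although a single pair of measurements does not prove it.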

Analysis

The first thing to note is that the raspivid latency (no queue, high profile) has risen from the 126ms found in the last tests to 154ms. The only difference was that I cloned the Sandisk microSDHC card onto a Transcend 8GB. I’ll set up some more tests to compare the cards. As these tests were run from the same card and from the same boot, they are still valid for comparison.

It is immediately obvious that the gst-rpicamsrc latency is about 20% higher than the raspivid script, so the conclusion from the first publish of this article still stands.

What can be added is that using the baseline profile does reduce the latency a little: 1 to 3ms in most cases.

Adding a queue element does provide a benefit for the gst-rpicamsrc script, especially with the baseline profile where a 9ms reduction in latency was observed. For the raspivid script adding a queue element actually increased the latency by 3 to 4ms. I suspect this is because the video stream is already decoupled from gstreamer by being piped in from an external process.
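The percentages quoted in this analysis can be recomputed from the averaged results. The following sketch uses awk for the floating-point arithmetic; the latencies are copied from the results above, and the function name `overhead_pct` is just an illustrative choice.

```bash
#!/bin/bash
# Latency overhead of gst-rpicamsrc relative to raspivid, in percent.
overhead_pct() {
    # $1 = gst-rpicamsrc latency (ms), $2 = raspivid latency (ms)
    LC_ALL=C awk -v a="$1" -v b="$2" 'BEGIN { printf "%.1f\n", (a - b) / b * 100 }'
}

# Averaged latencies (ms) at 48 fps, taken from the results above.
echo "baseline, no queue:   $(overhead_pct 184.2 153.9)%"
echo "baseline, with queue: $(overhead_pct 175.4 156.9)%"
echo "high, no queue:       $(overhead_pct 186.2 154.1)%"
echo "high, with queue:     $(overhead_pct 185 159.7)%"
```

The two no-queue rows come out at 19.7% and 20.8%, in line with the figure of about 20%. The rows with a queue show a smaller gap, because the queue helped gst-rpicamsrc while slightly hurting raspivid.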

Conclusion

Using gst-rpicamsrc provides no benefit for reducing latency over raspivid. That is not to say gst-rpicamsrc provides no other benefits. For any use other than FPV, I would definitely use gst-rpicamsrc instead of having to pipe the video in through stdin. It provides plenty of options for setting up the video stream, as the output of `gst-inspect-1.0 rpicamsrc` above showed.

The problem here is that I am targeting this development for FPV use, where low latency is the driving factor. At the moment my lowest latency for an adequate quality HD stream is 125ms and I really need to get this under 100ms to compete with current analog standard definition systems. Whether it is possible to shave off another 25ms remains to be seen.

Update: Following the additional tests I would add that it is better to use the baseline profile over the high profile.
